The problem is that this isn't really working: training converges to a loss of 0.5-0.6, and the network just predicts the whole output to be background.

Obtaining log-probabilities in a neural network is easily achieved by adding a LogSoftmax layer as the last layer of your network. You may use CrossEntropyLoss instead if you prefer not to add an extra layer. My model is an nn.Sequential, and when I use softmax at the end, it gives me worse accuracy on the test data.

CrossEntropyLoss is a loss function provided by the torch.nn module. It creates a criterion that measures the cross-entropy loss. For the loss, I am choosing nn.CrossEntropyLoss() in PyTorch, which (as I have found out) does not want one-hot encoded labels as true labels, but takes a LongTensor of class indices instead. A combined loss adds Dice loss to the standard binary cross-entropy (BCE) loss that is generally the default for segmentation models.

With PyTorch autograd, loss.backward() backpropagates through the cross-entropy loss, the 2nd linear layer, tanh, batchnorm, the 1st linear layer, and the embedding table.

Land-cover products derived from historical aerial orthomosaics acquired decades ago can provide important evidence to inform long-term studies.

1) Adding 3 more GRU layers to the decoder to.

> batch_size = 5, #classes = 2, picture = 384x384 and 81 pictures deep.

I have to do some dimension changes with the ground truth, since nn.CrossEntropyLoss() expects the ground truth to be of size (5, 384, 384, 81), while the output is of size (5, 2, 384, 384, 81):

Gt_temp = torch.tensor(np.zeros((output.shape[0], output.shape[2], output.shape[3], output.shape[4]))).long()

Right now my implementation looks like this: def loss(output, gt): The model and loss function work in the native PyTorch implementation.
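The shape handling described above for nn.CrossEntropyLoss() on volumes can be sketched as follows. This is a minimal illustration, not the poster's code: the sizes are shrunk stand-ins for (5, 2, 384, 384, 81), and the names `one_hot_gt`, `labels`, and `target` are hypothetical. It assumes the ground truth arrives one-hot encoded along the class dimension.

```python
import torch
import torch.nn as nn

# Small stand-in sizes (assumption); the post uses batch 5, 2 classes, 384x384, depth 81.
N, C, H, W, D = 2, 2, 8, 8, 4

# Raw network output: one score (logit) per class and voxel, no softmax applied.
logits = torch.randn(N, C, H, W, D)

# Hypothetical one-hot ground truth with the same shape as the output.
labels = torch.randint(0, C, (N, H, W, D))        # random class index per voxel
one_hot_gt = torch.zeros(N, C, H, W, D)
one_hot_gt.scatter_(1, labels.unsqueeze(1), 1.0)  # write a 1 at the true class

# Collapse the class dimension to obtain class indices of shape (N, H, W, D),
# the LongTensor target described in the text.
target = one_hot_gt.argmax(dim=1)

criterion = nn.CrossEntropyLoss()
loss = criterion(logits, target)                  # scalar, averaged over all voxels
```

Taking `argmax` over the class dimension avoids allocating a zero tensor and filling it by hand, and it keeps working if more foreground classes are added later.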

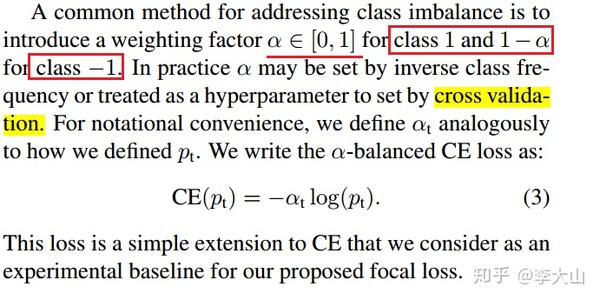

Now I want to compute the cross entropy loss. #classes is equal to two right now, and the two dimensions are pretty much just the inverse of one another, since I only have the background class and one foreground class right now. From the linked document, I think the current CrossEntropyLoss in PyTorch only supports the linear combination between the original target and a vector of.

Multitemporal environmental and urban studies are essential to guide policy making to ultimately improve human wellbeing in the Global South.

To compute the cross entropy loss between the input and target (predicted and actual) values, we apply the function CrossEntropyLoss().
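As a rough sketch of that call, the snippet below computes the loss on toy data and checks that CrossEntropyLoss on raw logits matches LogSoftmax followed by NLLLoss. The tensor sizes and variable names are illustrative assumptions, not taken from the post.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
logits = torch.randn(4, 3)            # 4 samples, 3 classes (illustrative sizes)
target = torch.tensor([0, 2, 1, 2])   # class indices, dtype int64

# CrossEntropyLoss applied directly to raw logits.
ce = nn.CrossEntropyLoss()(logits, target)

# The same quantity built from an explicit LogSoftmax layer plus NLLLoss.
log_probs = nn.LogSoftmax(dim=1)(logits)
nll = nn.NLLLoss()(log_probs, target)
same = torch.allclose(ce, nll)

# Feeding softmax outputs into CrossEntropyLoss normalizes twice,
# which yields a different (flattened) loss.
double = nn.CrossEntropyLoss()(torch.softmax(logits, dim=1), target)
```

Because softmax outputs lie between 0 and 1, the doubly normalized loss in this three-class toy example cannot fall below roughly 0.55, however good the predictions are. That may be one reason a trailing softmax layer hurts results, though whether it applies to the model described here is an assumption.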

I have a question regarding an optimal implementation of cross-entropy loss in my PyTorch network. I am building a network that predicts 3D segmentations of volume pictures. The input into my network when training is of the shape. Input channels is equal to one since I only have greyscale images, and depth is according to the number of 2D pictures that make up the 3D volume. I have either the background class or one foreground class, but it should have the possibility to also predict two or more different foreground classes. I know that CrossEntropyLoss in PyTorch expects logits.

Another way to customize the training loop behavior for the PyTorch Trainer is to use. Here, we will use cross-entropy loss, for example, but you can use any loss function from the library: CrossEntropyLoss(weight=torch.tensor([1.0, 2.0, 3.0])).
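To illustrate what the `weight` argument does, here is a small sketch with made-up data: three classes, the weights 1.0, 2.0, and 3.0 from the text, and an imbalanced target where class 0 dominates. The variable names are hypothetical. With the default mean reduction, the weighted loss is the weighted average of the per-sample losses, which the manual computation reproduces.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
logits = torch.randn(6, 3)                    # 6 samples, 3 classes (illustrative)
target = torch.tensor([0, 0, 0, 0, 1, 2])     # class 0 dominates, like a background class
weights = torch.tensor([1.0, 2.0, 3.0])       # per-class weights from the text

# Built-in weighted cross entropy.
weighted = nn.CrossEntropyLoss(weight=weights)(logits, target)

# Manual check: per-sample losses, each scaled by the weight of its true class,
# then divided by the sum of those weights.
per_sample = nn.CrossEntropyLoss(reduction="none")(logits, target)
w = weights[target]
manual = (w * per_sample).sum() / w.sum()
```

Giving rarer classes larger weights makes mistakes on them cost more, which is a common thing to try when a segmentation model collapses to predicting only background. Whether it helps in a particular setup has to be checked empirically.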
